Freshness Tiers Are the Missing Lever in Your Cloud Cost Strategy

Analytics | April 16, 2026

Stop Overpaying for Data Recency That Does Not Drive Decisions

Executive Summary

Enterprises overspend on cloud data platforms because freshness is applied uniformly instead of selectively. High-cost compute is consumed refreshing datasets that do not influence time-sensitive decisions.

By introducing freshness tiers aligned to business criticality, organizations can reduce unnecessary compute, simplify platform design, and improve cost visibility. This ensures infrastructure investment scales with decision impact rather than refresh frequency.

Freshness Without Context Is the Fastest Way to Inflate Cloud Costs

Perceptive Analytics Point of View

At Perceptive Analytics, we consistently see that cloud inefficiency is driven by unexamined refresh behavior rather than scale or tooling gaps. Platforms such as Snowflake and Google BigQuery provide elastic compute, but most enterprises do not control when that compute is activated.

Our recommendation is to treat freshness as a governed economic decision. When refresh frequency is tied to decision latency and enforced through metadata, organizations naturally shift compute toward high-impact use cases. Perceptive Analytics has observed that this not only reduces cost leakage but also improves clarity on which datasets truly influence business outcomes.

Where Compute Is Being Consumed Without Decision Impact

A typical enterprise setup reveals that refresh strategies are rarely aligned with actual usage patterns or decision urgency. Pipelines are scheduled based on legacy assumptions, and over time, these assumptions become embedded into system design. As explored in Perceptive Analytics’ guide on controlling cloud data costs without slowing insight velocity, the root cause is almost always behavioral rather than architectural.

| Workload Type | Decision Dependency | Typical Refresh Pattern | Observed Inefficiency |
| --- | --- | --- | --- |
| Revenue and inventory dashboards | Immediate | Hourly or near real-time | Appropriate |
| Operational dashboards | Daily decisions | Multiple refreshes per day | Excess compute usage |
| Exploratory analytics | Ad hoc usage | Daily scheduled refresh | Largely unused freshness |

This creates deeper structural issues than just cost. Compute is triggered regardless of whether data is consumed, leading to idle processing cycles that deliver no incremental business value. Low-priority workloads often compete with critical ones, increasing contention and reducing predictability. Engineering teams also spend time maintaining pipelines that have minimal decision relevance.
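
One way to make these idle processing cycles visible is to compare refresh timestamps with read timestamps for a single dataset. The sketch below is illustrative only: the function name, the timestamps, and the rule that a refresh counts as idle when nothing reads the dataset before the next refresh are assumptions, not features of any particular platform.

```python
from bisect import bisect_left
from datetime import datetime


def count_idle_refreshes(refresh_times, read_times):
    """Count refreshes whose output was never read before the next refresh.

    Assumes naive datetimes taken from the same clock.
    """
    refreshes = sorted(refresh_times)
    reads = sorted(read_times)
    idle = 0
    for i, start in enumerate(refreshes):
        # The window closes at the next refresh; the last window stays open.
        end = refreshes[i + 1] if i + 1 < len(refreshes) else datetime.max
        # Index of the first read at or after this refresh.
        j = bisect_left(reads, start)
        if j == len(reads) or reads[j] >= end:
            idle += 1
    return idle


# Hypothetical day: four scheduled refreshes, two reads in mid-morning.
day = datetime(2026, 4, 15)
refreshes = [day.replace(hour=h) for h in (0, 6, 12, 18)]
reads = [day.replace(hour=9, minute=30), day.replace(hour=10, minute=15)]

idle = count_idle_refreshes(refreshes, reads)
print(f"{idle} of {len(refreshes)} refreshes were never read")
```

In this hypothetical day, only the 06:00 refresh is followed by a read before the next run, so three of the four refreshes consumed compute without informing any decision.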

Studies confirm that a significant portion of analytics cloud spend is wasted due to such misalignment. The real issue is not the volume of data, but the lack of discipline in how freshness is applied across workloads. For a deeper look at how data pipeline design contributes to this, see event-driven versus scheduled data pipelines.

A Tiered Freshness Model That Forces Alignment With Business Reality

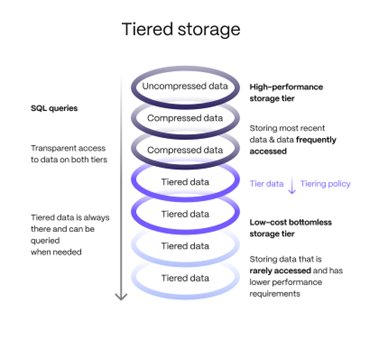

Organizations that successfully control costs redefine freshness as a business construct rather than a technical configuration. A structured three-tier model creates this alignment.

Tier 1: Decision-Critical Systems

Where delays directly impact revenue, risk, or operational continuity. These workloads demand consistent performance and are supported by premium, always-available compute environments.

Tier 2: Operational Analytics

Where decisions are time-bound but not immediate. These workloads benefit from scheduled refresh cycles and shared infrastructure that balances performance with cost efficiency. Organizations building out this tier often benefit from a well-governed modern data warehouse strategy to avoid the common reporting trap.

Tier 3: Exploratory Workloads

Where immediacy does not influence outcomes. These are best executed on serverless or orchestrated environments. Tools like dbt, Airflow, and Prefect are well-suited here. See Perceptive Analytics’ Airflow vs Prefect vs dbt orchestration guide for a framework on choosing the right tool for each tier.

What makes this model effective is not classification alone but the shift it creates. Freshness becomes a declared business requirement with accountability, forcing teams to justify why certain data needs to be updated more frequently. This eliminates inherited defaults and replaces them with intentional design.

Perceptive Analytics View: Freshness tiers work because they force a conversation that was never happening. When a team has to declare that a dataset is Tier 1 and justify the associated compute cost, most datasets quietly move to Tier 2 or Tier 3. That is where the savings come from.

Embedding Freshness Into the Architecture, Not Governance Decks

The impact of freshness tiering depends on how deeply it is integrated into the platform. Organizations that succeed operationalize this through system-level controls rather than policy documents. This is the principle at the core of data observability as foundational infrastructure: governance is only effective when it is embedded in execution.

- Metadata tagging ensures every dataset and dashboard carries a defined freshness tier that directly drives execution behavior.

- Compute environments are pre-mapped to tiers, removing ambiguity in how workloads are processed.

- Query-level cost attribution highlights which dashboards consume disproportionate compute relative to usage.

This creates a system where cost governance is enforced automatically rather than reviewed retrospectively. It also introduces consistency across teams, reducing fragmentation in how pipelines are designed and executed. Why data integration strategy is critical for metadata and lineage offers a useful companion framework for understanding how metadata control shapes downstream cost behavior.
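
As a rough illustration of metadata driving execution, the sketch below tags each dataset with a tier and lets a scheduler derive both the refresh decision and the compute pool from that tag. The dataset names, pool names, and staleness limits are hypothetical values chosen for the example, not recommendations or settings from any specific platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Tier(Enum):
    DECISION_CRITICAL = 1
    OPERATIONAL = 2
    EXPLORATORY = 3


@dataclass(frozen=True)
class TierPolicy:
    compute_pool: str                   # hypothetical pool name
    max_staleness: Optional[timedelta]  # None means refresh only on demand


# Assumed policy values, for illustration only.
POLICIES = {
    Tier.DECISION_CRITICAL: TierPolicy("dedicated_always_on", timedelta(minutes=15)),
    Tier.OPERATIONAL: TierPolicy("shared_scheduled", timedelta(hours=24)),
    Tier.EXPLORATORY: TierPolicy("serverless_on_demand", None),
}

# Metadata tags: every dataset carries exactly one declared tier.
DATASET_TIERS = {
    "revenue_dashboard": Tier.DECISION_CRITICAL,
    "ops_daily_summary": Tier.OPERATIONAL,
    "churn_exploration": Tier.EXPLORATORY,
}


def refresh_plan(dataset, last_refreshed, now):
    """Return the compute pool to refresh on, or None if no refresh is due."""
    policy = POLICIES[DATASET_TIERS[dataset]]
    if policy.max_staleness is None:
        return None  # on-demand tiers are never refreshed by the scheduler
    if now - last_refreshed >= policy.max_staleness:
        return policy.compute_pool
    return None


now = datetime(2026, 4, 16, 12, 0)
for name in DATASET_TIERS:
    print(name, "->", refresh_plan(name, now - timedelta(hours=3), now))
```

Because the scheduler reads only the tag, changing a dataset's tier changes its refresh behavior and compute pool in one place, with no pipeline code edits.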

Over time, this architectural approach simplifies the data platform itself. Fewer unnecessary refresh cycles reduce orchestration complexity, lower failure rates, and improve overall system reliability.

When Freshness Has a Cost, Demand Becomes Rational

One of the most powerful shifts occurs when organizations introduce visibility into the cost of increasing freshness. Instead of allowing unrestricted escalation, they make it a conscious trade-off. Teams requesting higher refresh frequency are shown the incremental compute cost associated with that decision.

This prompts teams to evaluate whether increased recency actually improves outcomes or simply adds technical overhead, and it changes behavior. Data consumers begin prioritizing decision relevance over perceived freshness, which leads to more disciplined usage patterns.
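
The incremental cost shown to a requesting team can be approximated with simple arithmetic. The sketch below assumes that every refresh run takes the same time regardless of frequency and that compute is billed at a flat hourly rate; real billing models (minimum billing increments, incremental loads) will differ, and the rate and run time used here are hypothetical.

```python
def daily_refresh_cost(runs_per_day, minutes_per_run, cost_per_compute_hour):
    """Daily compute cost of a refresh schedule under flat hourly pricing."""
    return runs_per_day * minutes_per_run * cost_per_compute_hour / 60


def incremental_monthly_cost(current_runs, requested_runs, minutes_per_run,
                             cost_per_compute_hour, days=30):
    """Extra monthly spend from moving to a more frequent refresh schedule."""
    current = daily_refresh_cost(current_runs, minutes_per_run, cost_per_compute_hour)
    requested = daily_refresh_cost(requested_runs, minutes_per_run, cost_per_compute_hour)
    return (requested - current) * days


# Hypothetical request: move a dataset from one refresh per day to hourly,
# with a 6-minute run and an assumed rate of 4.00 per compute hour.
extra = incremental_monthly_cost(
    current_runs=1, requested_runs=24,
    minutes_per_run=6, cost_per_compute_hour=4.00,
)
print(f"Incremental monthly cost: {extra:,.2f}")
```

Under these assumptions the daily cost rises from 0.40 to 9.60, so the hourly schedule adds roughly 276 per month for a single dataset. That is the figure a stakeholder would weigh against the value of fresher data.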

In engagements where Perceptive Analytics introduced this transparency, unnecessary demand dropped without any enforcement. The same principle applies to dashboard design: see how answering strategic questions through high-impact dashboards changes the economics of what gets built and maintained.

Perceptive Analytics View: Visibility is a forcing function. When engineers can show a stakeholder the exact cost of moving a dataset from Tier 2 to Tier 1, the conversation shifts from ‘we need this faster’ to ‘is faster worth the cost?’ That shift alone recovers significant spend.

Continuous Validation Keeps Freshness Grounded in Reality

Even well-designed models drift without ongoing validation. Over time, teams tend to overestimate the importance of freshness, leading to gradual tier inflation. Organizations must regularly evaluate how data is actually used.

This includes identifying datasets that are rarely accessed in real time despite being assigned high-priority tiers, and reducing their refresh frequency accordingly. It also involves tracking whether faster refresh cycles are translating into faster or better decisions.
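
A periodic review of this kind can start from a very simple signal: how often a dataset is read relative to how often it is refreshed. The sketch below flags Tier 1 and Tier 2 datasets that fall below an assumed threshold of one read per refresh over a 30-day window. The threshold, field names, and usage figures are hypothetical, and a real review would also weigh who is reading and what decision depends on it.

```python
from dataclasses import dataclass


@dataclass
class DatasetUsage:
    name: str
    tier: int           # 1, 2, or 3
    refreshes_30d: int  # refresh runs in the last 30 days
    reads_30d: int      # queries or dashboard views in the last 30 days


def demotion_candidates(usage, min_reads_per_refresh=1.0):
    """Flag Tier 1 and Tier 2 datasets read less often than they are refreshed."""
    flagged = []
    for d in usage:
        if d.tier >= 3 or d.refreshes_30d == 0:
            continue  # already on the lowest tier, or never refreshed
        if d.reads_30d / d.refreshes_30d < min_reads_per_refresh:
            flagged.append(d.name)
    return flagged


# Hypothetical 30-day usage snapshot.
usage = [
    DatasetUsage("revenue_dashboard", tier=1, refreshes_30d=720, reads_30d=5400),
    DatasetUsage("inventory_alerts", tier=1, refreshes_30d=720, reads_30d=90),
    DatasetUsage("ops_daily_summary", tier=2, refreshes_30d=120, reads_30d=300),
    DatasetUsage("churn_exploration", tier=3, refreshes_30d=30, reads_30d=2),
]

print(demotion_candidates(usage))
```

Here only `inventory_alerts` is flagged: it is refreshed hourly as a Tier 1 dataset but read about once every eight refreshes, which makes it a candidate for a conversation about moving it to a lower tier.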

Without this feedback loop, freshness tiers become static and lose their effectiveness. With it, organizations maintain a system where compute allocation continuously reflects real business needs rather than outdated assumptions. For teams building this discipline, how automated data quality monitoring improved accuracy and trust across systems demonstrates what continuous validation looks like in a production environment.

Choosing the right data ownership model also underpins this validation work. Choosing data ownership based on decision impact provides a structured approach to assigning accountability in ways that support ongoing freshness governance.

Conclusion

Freshness tiers transform data platforms by aligning compute usage with decision urgency instead of technical defaults. This reduces unnecessary processing, improves system efficiency, and creates transparency in how infrastructure is consumed.

At Perceptive Analytics, we help enterprises design systems where freshness is intentional, governed, and continuously optimized. The opportunity is not just to reduce cost but to ensure every unit of compute contributes to a meaningful business decision. For organizations ready to take the next step, Perceptive Analytics’ data transformation maturity framework is a practical starting point for assessing where your platform stands today.

Perceptive Analytics can be your companion and guide in the journey to optimize your cloud costs, from initial diagnostic through to governed, continuously improving platform design. Our Snowflake consulting practice, Talend integration expertise, and advanced analytics consulting team work together to make freshness a business-governed capability rather than a technical afterthought.

Frequently Asked Questions

What are freshness tiers in cloud data platforms?

Freshness tiers are a structured way to classify datasets based on how frequently they need to be updated, depending on the urgency and impact of the decisions they support. Instead of applying uniform refresh schedules, organizations assign Tier 1 (real-time), Tier 2 (scheduled), and Tier 3 (on-demand) refresh strategies aligned to business criticality.

Why do enterprises overspend on data freshness?

Overspending typically occurs when data refresh frequency is applied uniformly across all workloads, regardless of their business importance. This leads to high compute usage for datasets that are rarely used in time-sensitive decisions, creating unnecessary cloud costs without improving outcomes.

How do freshness tiers reduce cloud costs?

Freshness tiers reduce costs by aligning compute usage with actual decision needs. High-cost, real-time compute is reserved for critical workloads, while less important datasets are refreshed less frequently or on demand. This minimizes idle processing and ensures infrastructure investment is tied to business value.

How can organizations implement freshness tiers effectively?

Successful implementation requires embedding freshness into the data architecture. This includes tagging datasets with freshness metadata, mapping compute resources to tiers, and enabling query-level cost tracking. The goal is to enforce refresh behavior automatically rather than relying on manual governance.

What is the role of cost visibility in managing data freshness?

Cost visibility helps teams understand the trade-offs between data recency and compute spend. When users see the incremental cost of increasing refresh frequency, they are more likely to prioritize decision impact over unnecessary freshness, leading to more disciplined and efficient usage.

How often should freshness tiers be reviewed and updated?

Freshness tiers should be reviewed regularly as part of ongoing data platform governance. Usage patterns, business priorities, and decision timelines evolve over time, so periodic validation keeps refresh strategies aligned with actual needs and prevents tier inflation.