Your Data Platform Is Overspending on Compute

Analytics | April 8, 2026

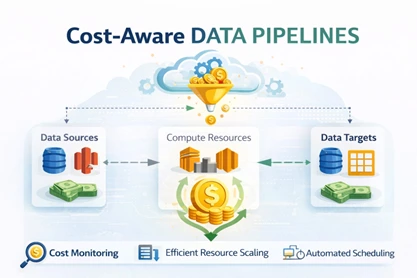

Turning Pipeline Orchestration into a Cost-Governance Mechanism

Executive Summary

The average enterprise wastes 20-30% of its analytics cloud budget. The cause is almost never inefficient tooling and almost always architectural: platforms treat a regulatory reporting pipeline and an exploratory model as identical workloads that deserve identical infrastructure.

This architectural neutrality toward business value quietly inflates cloud spending without improving decision outcomes. Cost-aware pipeline design solves this by aligning compute allocation with business criticality. By introducing workload tiers and enforcing them through orchestration policies, organizations ensure high-impact decisions receive reliable infrastructure while exploratory analytics run on flexible, lower-cost compute.

The result is a data platform where infrastructure spending scales with decision value rather than pipeline volume.

Talk with our analytics experts today: book a free 30-min consultation session.

Why Cloud Cost Problems Start in Pipeline Design: A Perceptive Analytics POV

Perceptive Analytics recommends shifting cloud optimization from tools to economics. Gartner projects that over 60% of workloads will support analytics by 2027, and studies find that 20–30% of spend is wasted. The real issue is a lack of business context, not a lack of capability.

What we see is simple: when every pipeline is treated equally, infrastructure scales without reflecting value. We recommend embedding business criticality into pipeline orchestration so high-impact workloads are prioritized and lower-value ones are optimized for cost—ensuring cloud spend aligns directly with business outcomes.


Why Do Data Pipelines Consume Premium Compute Regardless of Business Value?

Most platforms treat pipelines as technical processes rather than decision-support systems. As analytics adoption grows, infrastructure begins supporting workloads with very different business importance on identical compute environments. This creates predictable cost inefficiencies:

Regulatory reporting pipelines compete with exploratory analytics for compute capacity.

Experimental workloads run on production-grade infrastructure.

Uniform refresh schedules trigger unnecessary compute usage.

Peak infrastructure scaling is driven by low-priority workloads.

Quick Diagnostic: Pipeline Treatment Mismatch

| Pipeline Type | Business Impact | Typical Infrastructure Treatment |
|---|---|---|
| Financial and regulatory reporting | Compliance and executive decision making | Shared compute cluster |
| Operational dashboards | Daily operational monitoring | Shared compute cluster |
| Data science experimentation | Exploration and prototyping | Shared compute cluster |

Research from McKinsey indicates that analytics infrastructure costs frequently grow two to three times faster than actual decision usage when workload prioritization mechanisms are absent. The platform scales technically, but it does not scale economically.
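You can run a rough version of this diagnostic on your own platform. Group pipelines by the compute pool they run on, then flag any pool that hosts more than one tier. The sketch below assumes a hypothetical inventory record (`Pipeline`, with `name`, `tier` and `compute_pool` fields). In practice these fields would come from your orchestrator's metadata or resource tags.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Pipeline:
    # Hypothetical inventory record; field names are illustrative assumptions.
    name: str
    tier: int          # 1 = critical, 2 = operational, 3 = experimental
    compute_pool: str  # cluster or warehouse the pipeline runs on


def mixed_tier_pools(pipelines):
    """Return each pool that hosts more than one tier, mapped to the sorted tiers found there."""
    tiers_by_pool = defaultdict(set)
    for p in pipelines:
        tiers_by_pool[p.compute_pool].add(p.tier)
    return {pool: sorted(tiers) for pool, tiers in tiers_by_pool.items() if len(tiers) > 1}


inventory = [
    Pipeline("regulatory_reporting", 1, "shared_cluster"),
    Pipeline("ops_dashboard_refresh", 2, "shared_cluster"),
    Pipeline("churn_model_experiment", 3, "shared_cluster"),
]
print(mixed_tier_pools(inventory))  # {'shared_cluster': [1, 2, 3]}
```

A pool that returns `[1, 2, 3]` matches the pattern in the diagnostic table: regulatory, operational and experimental work all competing for the same capacity.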


What Happens When Compute Allocation Reflects Business Criticality?

Cost-aware data platforms introduce a pipeline criticality framework that aligns infrastructure behavior with decision impact. At Perceptive Analytics, workloads are categorized based on the consequences of delay, failure, or latency. Typical enterprise classification models include:

Workload Tiers

Tier 1: Financial reporting, regulatory disclosures, and executive performance metrics that require maximum reliability and predictable performance.

Tier 2: Operational dashboards and departmental analytics that require timely refresh cycles but tolerate moderate scheduling flexibility.

Tier 3: Experimental analytics, data science exploration, and early-stage modeling where cost efficiency is more important than guaranteed performance.
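The tiering rule can be written as a small classification function. This is a minimal sketch that assumes two hypothetical yes/no attributes per pipeline. A real classification model would likely weigh more factors, such as latency tolerance, data-freshness requirements and the number of downstream consumers.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    CRITICAL = 1      # financial reporting, regulatory disclosures, executive metrics
    OPERATIONAL = 2   # operational dashboards, departmental analytics
    EXPERIMENTAL = 3  # exploration, data science prototyping


@dataclass(frozen=True)
class PipelineProfile:
    # Hypothetical attributes describing the consequence of delay or failure.
    regulatory_or_executive: bool  # output feeds compliance or executive decisions
    consumed_daily: bool           # output feeds routine operational decisions


def classify(profile: PipelineProfile) -> Tier:
    """Assign a tier based on the business consequence of delay or failure."""
    if profile.regulatory_or_executive:
        return Tier.CRITICAL
    if profile.consumed_daily:
        return Tier.OPERATIONAL
    return Tier.EXPERIMENTAL


print(classify(PipelineProfile(regulatory_or_executive=False, consumed_daily=True)).name)  # OPERATIONAL
```

The checks are ordered from most to least severe consequence, so a pipeline that meets several criteria lands in the highest tier it qualifies for.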

Once pipelines are tagged with these tiers, orchestration systems can enforce compute discipline through infrastructure policies. Organizations implementing this model commonly introduce:

Workload isolation: Separate compute clusters or warehouses ensure experimental workloads do not compete with critical reporting pipelines.

Priority-based orchestration: Job schedulers allocate compute resources preferentially to Tier 1 workloads during resource contention.

Elastic scaling policies: Operational workloads scale dynamically while critical pipelines run on stable infrastructure environments.

Preemptible or spot compute for experimentation: Exploratory workloads run on discounted compute resources that may be interrupted during peak demand.
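These policies can be sketched as a tier-to-policy table plus a priority dispatcher that runs when compute slots are scarce. Everything here is hypothetical. The pool names and policy flags are illustrative and do not correspond to any scheduler's or cloud provider's API. A production orchestrator would apply the same ordering through its own priority or queue settings.

```python
import heapq
from dataclasses import dataclass

# Hypothetical tier policies: pool names and flags are illustrative only.
POLICIES = {
    1: {"pool": "tier1_dedicated", "scaling": "fixed", "spot": False},     # stable, isolated
    2: {"pool": "tier2_elastic", "scaling": "autoscale", "spot": False},   # elastic scaling
    3: {"pool": "tier3_spot", "scaling": "autoscale", "spot": True},       # interruptible, discounted
}


@dataclass(frozen=True)
class Job:
    name: str
    tier: int


def dispatch(jobs, free_slots):
    """Under contention, start jobs in tier order (Tier 1 first).

    Within a tier, submission order is preserved. Returns the started jobs
    with their target pool, plus the names of jobs still waiting.
    """
    # (tier, submission_seq, job): seq is unique, so Job objects are never compared.
    queue = [(job.tier, seq, job) for seq, job in enumerate(jobs)]
    heapq.heapify(queue)
    started = []
    while queue and free_slots > 0:
        _, _, job = heapq.heappop(queue)
        started.append((job.name, POLICIES[job.tier]["pool"]))
        free_slots -= 1
    waiting = [job.name for _, _, job in sorted(queue)]
    return started, waiting


jobs = [Job("churn_experiment", 3), Job("ops_dashboard", 2), Job("regulatory_close", 1)]
started, waiting = dispatch(jobs, free_slots=2)
# started: [("regulatory_close", "tier1_dedicated"), ("ops_dashboard", "tier2_elastic")]
# waiting: ["churn_experiment"]
```

The experimental job is submitted first but still waits, because the dispatcher orders by tier before submission time. The sequence number only breaks ties inside a tier.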

Google Cloud reports that spot compute can reduce processing costs by up to 70 percent for non-critical workloads, making this approach highly effective for experimental analytics environments.
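The arithmetic below sketches what a spot discount could mean for a Tier 3 budget. The inputs are hypothetical. The 70% discount matches the "up to" figure cited above, and the 10% rerun overhead is an assumed allowance for jobs that must restart after an interruption.

```python
def tier3_monthly_cost(on_demand_cost, spot_fraction, spot_discount, rerun_overhead=0.0):
    """Blend on-demand and spot spend for a Tier 3 workload.

    on_demand_cost: what the workload would cost entirely on on-demand compute
    spot_fraction:  share of that work moved to spot/preemptible capacity (0..1)
    spot_discount:  spot price reduction versus on-demand (0..1)
    rerun_overhead: extra spot work caused by interruptions (assumed, e.g. 0.10 = 10%)
    """
    spot_part = on_demand_cost * spot_fraction * (1 - spot_discount) * (1 + rerun_overhead)
    on_demand_part = on_demand_cost * (1 - spot_fraction)
    return round(spot_part + on_demand_part, 2)


# Hypothetical budget: 10,000 per month if run entirely on on-demand compute.
print(tier3_monthly_cost(10_000, spot_fraction=1.0, spot_discount=0.70, rerun_overhead=0.10))
# 3300.0, i.e. a 67% reduction once rerun overhead is included
```

Interruptions erode part of the headline discount. As a result, the realized saving sits somewhat below the advertised ceiling.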


Three Questions for CXOs

To operationalize this shift, challenge your teams with these questions this week:

Are our most business-critical pipelines guaranteed priority access to compute during peak demand?

Which workloads today are running on premium infrastructure without corresponding business impact?

Do we have a clear tiering and orchestration policy that links compute spend directly to decision value?

Can Scheduling Strategy Reduce Infrastructure Costs Without Reducing Workloads?

Infrastructure optimization is often approached through compute sizing rather than execution timing. Yet many pipelines run during peak infrastructure hours even when their outputs are not immediately consumed. Cost-aware orchestration introduces time-based workload routing aligned with decision urgency.

Time-Based Workload Routing

| Time Window | Typical Workload |
|---|---|
| Business hours | Tier 1 regulatory and executive pipelines |
| Extended hours | Tier 2 operational analytics |
| Off-peak windows | Tier 3 experimental workloads |
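A scheduler can enforce this routing by deferring each job to the next hour inside its tier's window. The hour ranges below are assumptions chosen for illustration, in the platform's local time:

- Business hours: 08:00 to 18:00
- Extended hours: 18:00 to 22:00
- Off-peak: 22:00 to 06:00

Substitute your own peak profile.

```python
# Hypothetical tier windows in local time (hours 0-23); 06:00-08:00 is left unassigned.
WINDOW_HOURS = {
    1: set(range(8, 18)),                      # business hours: Tier 1
    2: set(range(18, 22)),                     # extended hours: Tier 2
    3: set(range(22, 24)) | set(range(0, 6)),  # off-peak: Tier 3
}


def next_start_hour(tier: int, requested_hour: int) -> int:
    """Return the first hour at or after requested_hour (wrapping past midnight) in the tier's window."""
    allowed = WINDOW_HOURS[tier]
    for offset in range(24):
        candidate = (requested_hour + offset) % 24
        if candidate in allowed:
            return candidate
    raise ValueError(f"tier {tier} has no allowed hours")


print(next_start_hour(3, 14))  # 22: an experimental job submitted at 14:00 waits for off-peak
print(next_start_hour(2, 19))  # 19: already inside the extended-hours window
```

This sketch mirrors the table literally, so a Tier 1 job requested at 20:00 would wait until 08:00. In practice you might exempt Tier 1 from deferral entirely and apply time windows only to Tiers 2 and 3.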

This scheduling strategy delivers structural benefits:

Peak infrastructure demand declines.

Compute contention between pipelines decreases.

Critical reporting pipelines achieve predictable performance.

Exploratory workloads run on lower-cost infrastructure.

FinOps Foundation studies show organizations implementing intelligent workload scheduling frequently reduce analytics compute costs by 15 to 25 percent without reducing workload volume.

Conclusion

Cloud spending escalates when infrastructure treats every pipeline as equally important. Cost-aware pipeline architectures solve this by aligning compute allocation with business criticality.

By embedding workload tiers, priority orchestration, and intelligent scheduling into platform design, organizations shift cost control from reactive monitoring to architectural governance. At Perceptive Analytics, we help you operationalize this shift—designing business-aware data platforms where infrastructure automatically prioritizes what matters most, ensuring your cloud spend scales with value, not just volume.

Book a free 30-min consultation session with our analytics experts today!