Metadata That Predicts Failure: How LLM Copilots Are Rewriting Change Intelligence

AI | April 22, 2026

Transforming lineage, usage, and schema history into real-time impact prediction for safer data platform evolution.

Executive Summary

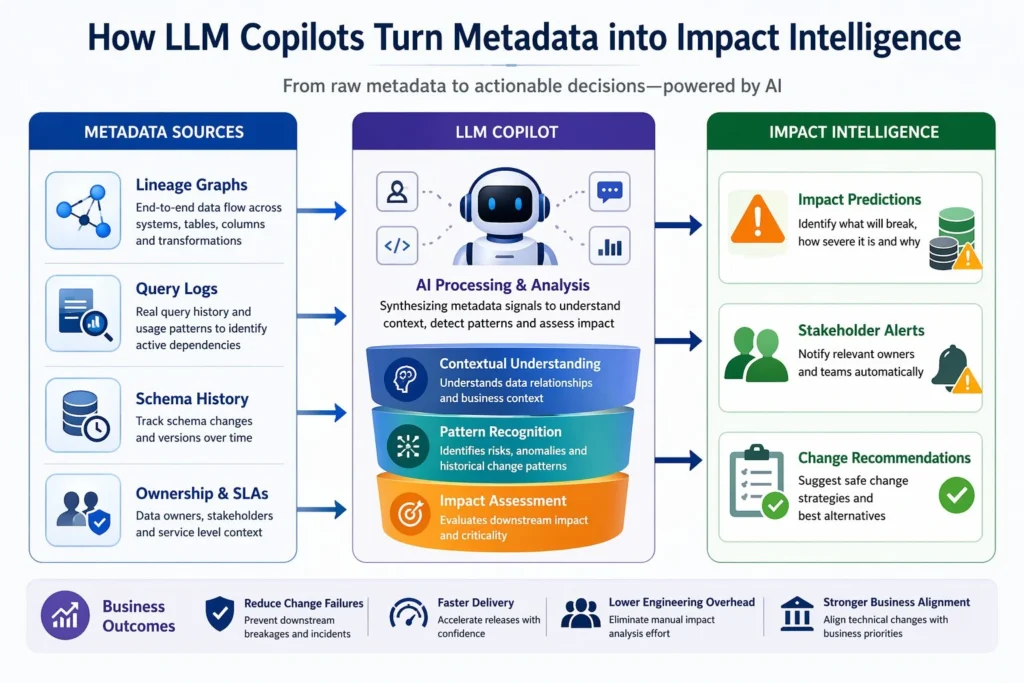

LLMs are transforming metadata into a predictive decision system that evaluates downstream impact before deployment. This depends on column-level lineage, query intelligence, and continuously refreshed metadata pipelines working together. Organizations that operationalize this capability reduce change failure rates, engineering dependency, and incident costs, while accelerating release velocity. The shift is from manual lineage interpretation to AI-driven, explainable impact prediction embedded directly into engineering workflows.

Metadata as a Control Layer

A Perceptive Analytics POV

At Perceptive Analytics, we observe that most enterprises have lineage and catalogs in place but lack decision intelligence at the point of change. Engineers still depend on manual reasoning to assess impact, creating delays and risk.

LLMs convert fragmented metadata into real-time predictions, but only when metadata is complete, granular, and continuously governed. Organizations that operationalize this capability move toward system-driven decision-making where every change is evaluated before execution.

Talk with our consultants today. Book a session with our experts now.

Why Impact Analysis Breaks Despite Lineage Investments

Most systems fail not because lineage is absent, but because it is not designed for decision-making. Lineage shows relationships, but it does not quantify risk, usage criticality, or business impact.

The core gaps are structural:

- Lineage is captured at table level, while failures occur at the column level

- Query usage is not integrated, so active dependencies are indistinguishable from unused ones

- Metadata becomes outdated quickly, especially in dynamic pipelines

- Insights are not embedded in workflows, forcing manual interpretation

This results in delayed deployments, unexpected downstream failures, and dependence on a small set of experts. According to Gartner 2025, poor metadata management remains a primary reason why data governance programs fail to deliver measurable outcomes. Our article on why data integration strategy is critical for metadata and lineage explains why lineage must be designed for decision-making from the start, not retrofitted after a governance failure surfaces.
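The gap between table-level and column-level lineage can be made concrete with a small sketch. The edge list, asset names, and helper functions below are hypothetical illustrations, not the API of any lineage product.

```python
from collections import deque

# Hypothetical column-level lineage: each key feeds the columns in its list.
COLUMN_EDGES = {
    "raw.transactions.amount": ["staging.payments.amount_usd"],
    "raw.transactions.currency": ["staging.payments.amount_usd"],
    "raw.transactions.note": [],
    "staging.payments.amount_usd": ["finance.revenue_daily.recognized_revenue"],
}


def downstream_columns(start, edges):
    """Breadth-first walk returning every column reachable from `start`."""
    seen, queue = set(), deque([start])
    while queue:
        current = queue.popleft()
        for child in edges.get(current, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def table_of(column):
    """Drop the column name, keeping `schema.table`."""
    return column.rsplit(".", 1)[0]


def table_level_impact(table, edges):
    """Table-level view: the union of impact over every column of `table`."""
    impacted = set()
    for column in edges:
        if table_of(column) == table:
            impacted |= {table_of(c) for c in downstream_columns(column, edges)}
    return impacted


# Column-level view: dropping `note` touches nothing downstream.
note_impact = downstream_columns("raw.transactions.note", COLUMN_EDGES)
# Column-level view: changing `amount` reaches the finance revenue column.
amount_impact = downstream_columns("raw.transactions.amount", COLUMN_EDGES)
# Table-level view: any change to the table flags both downstream tables.
coarse_impact = table_level_impact("raw.transactions", COLUMN_EDGES)
```

In this toy graph, the table-level view reports the same two downstream tables whether the change touches `note` or `amount`, while the column-level view separates a harmless change from one that reaches revenue reporting.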

What LLM Copilots Actually Enable

LLM copilots introduce a new interaction model where metadata becomes queryable intelligence. Instead of navigating tools, teams can directly ask what will break, who is affected, and how to proceed safely.

They enable:

- Impact prediction that identifies affected pipelines, dashboards, and models

- Business translation that connects data changes to revenue or operational impact

- Stakeholder mapping to identify owners of impacted assets

- Change recommendations based on historical schema evolution

- Faster root cause analysis when failures occur

A 2024 McKinsey study shows that AI-assisted engineering workflows improve productivity significantly, with the highest gains in analysis-heavy tasks like debugging and impact assessment, where metadata copilots play a central role. Our AI consulting practice helps enterprises design and deploy exactly this kind of LLM-powered intelligence layer on top of their existing metadata infrastructure.
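One way to picture metadata as queryable intelligence is a prompt that is assembled entirely from metadata records before any model is called. The sketch below is a minimal illustration under that assumption: the `build_impact_prompt` function, the record fields, and the asset names are hypothetical, and the step that sends the prompt to a model is deliberately left out.

```python
import json


def build_impact_prompt(change, impacted_assets):
    """Assemble a grounded prompt so the model only sees facts from metadata."""
    context = {
        "proposed_change": change,
        "impacted_assets": sorted(impacted_assets, key=lambda a: a["name"]),
        # Stakeholder mapping: deduplicated owners of the impacted assets.
        "owners_to_notify": sorted({a["owner"] for a in impacted_assets}),
    }
    return (
        "You are assisting with a data platform change review.\n"
        "Using ONLY the metadata below, state what will break, who is affected, "
        "and how to proceed safely. Say 'unknown' where the metadata is silent.\n\n"
        + json.dumps(context, indent=2)
    )


prompt = build_impact_prompt(
    change="rename transactions.amount to transactions.gross_amount",
    impacted_assets=[
        {"name": "dash.revenue_daily", "type": "dashboard", "owner": "finance-bi"},
        {"name": "pipe.settlement", "type": "pipeline", "owner": "payments-eng"},
        {"name": "dash.exec_summary", "type": "dashboard", "owner": "finance-bi"},
    ],
)
```

Keeping the context as structured JSON makes it easy to log exactly what the model was shown, which supports the explainability the article calls for.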

Case Study: Preventing Revenue Misreporting with Predictive Metadata

A global payments organization managing high-volume transactions faced repeated inconsistencies in financial reporting whenever schema updates were introduced. Lineage tools existed, but impact analysis required manual tracing across multiple pipelines and dashboards.

After implementing column-level lineage integrated with query logs and exposed through an LLM interface, the organization transformed its change workflow. A proposed modification to a transaction field was instantly analyzed across all dependencies. The system identified its impact on revenue recognition logic used by finance teams and recommended a controlled rollout strategy with backward compatibility.

The result was a 70 to 80 percent reduction in impact analysis time, elimination of reporting discrepancies, and improved alignment between engineering and finance teams. This demonstrates that LLM copilots help prevent failures rather than just respond to them. Our case study on automated data quality monitoring improving accuracy and trust across systems shows a comparable pattern of trust improvement achieved through automated validation at the pipeline layer.

The Metadata Foundation Required for LLM Accuracy

| Layer | Purpose | Why It Matters |
|---|---|---|
| Central Metadata Repository | Captures datasets, ownership, SLAs | Provides structured context for LLM reasoning |
| Column-Level Lineage | Tracks transformations across systems | Enables precise impact prediction |
| Query Logs | Records actual usage patterns | Identifies critical dependencies |
| Schema History | Tracks changes and versions | Supports predictive recommendations |
| Governance Layer | Controls access and visibility | Ensures trust and compliance |

Without this foundation, LLM outputs remain generic. With it, they become accurate, contextual, and decision-ready. At Perceptive Analytics, we consistently see that metadata maturity directly determines LLM effectiveness. Our article on data observability as foundational infrastructure covers how the monitoring layer that feeds this metadata foundation must be built into the pipeline architecture, not added as a reporting afterthought.
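A simple readiness check can show which of the five layers in the table actually hold a record for a given column. This is a sketch only: `MetadataStore` is a hypothetical in-memory stand-in, and a real implementation would query a catalog, a lineage service, and warehouse query logs instead.

```python
from dataclasses import dataclass, field


@dataclass
class MetadataStore:
    """Hypothetical in-memory stand-in for the five layers, keyed by column name."""
    owners: dict = field(default_factory=dict)           # Central Metadata Repository
    lineage: dict = field(default_factory=dict)          # Column-Level Lineage
    query_counts: dict = field(default_factory=dict)     # Query Logs
    schema_history: dict = field(default_factory=dict)   # Schema History
    access_policies: dict = field(default_factory=dict)  # Governance Layer


def missing_layers(column, store):
    """List the layers that hold no record for `column`, in table order."""
    layers = {
        "Central Metadata Repository": store.owners,
        "Column-Level Lineage": store.lineage,
        "Query Logs": store.query_counts,
        "Schema History": store.schema_history,
        "Governance Layer": store.access_policies,
    }
    return [name for name, records in layers.items() if column not in records]


# Only ownership and lineage are populated for this column.
store = MetadataStore(
    owners={"payments.amount": "finance-data"},
    lineage={"payments.amount": ["revenue.recognized"]},
)
gaps = missing_layers("payments.amount", store)
```

A copilot could surface these gaps alongside its answer, so that a prediction made without query logs or schema history is clearly marked as lower confidence.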

For organizations building this foundation on a modern cloud warehouse, our Snowflake consulting practice specifically includes column-level lineage and schema history as part of the platform design, ensuring the metadata layer is LLM-ready from the start.

How to Operationalize LLM-Based Impact Intelligence

Deploying this capability requires integrating it into how teams already work, rather than introducing another standalone system.

A practical approach includes:

1. Create a unified metadata access layer to eliminate blind spots in decision-making. This ensures leaders and engineers operate on one consistent view of dependencies, reducing misaligned changes and duplicated effort.

2. Automate lineage capture to enable real-time impact visibility. This allows teams to detect risks as systems evolve, instead of relying on outdated or manual analysis. Our article on event-driven vs. scheduled data pipelines explains when event-driven propagation is the right mechanism for keeping lineage continuously current rather than batch-refreshed.

3. Standardize column-level lineage to reduce failure risk at the point of change. This improves the precision of impact analysis, preventing high-cost downstream breakages in critical business systems.

4. Integrate query intelligence to prioritize what truly matters to the business. This focuses attention on actively used and revenue-impacting data assets, avoiding wasted effort on low-impact dependencies.

5. Embed LLM copilots into development workflows to accelerate safe decision-making. This enables engineers to assess impact within seconds during development, significantly reducing release delays and approval cycles. Our advanced analytics consultants design these workflow integrations as part of a broader platform engineering engagement, ensuring adoption is built in rather than bolted on.

Organizations that implement this model see faster release cycles, reduced incident rates, and stronger collaboration across teams.
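The steps above can be sketched as a pre-merge check that combines lineage with query usage and returns a review decision. Everything here is an assumption for illustration: the `review_change` function, the decision labels, the 30-day query counts, and the threshold of 100 queries are hypothetical and would need tuning for a real platform.

```python
def downstream(column, lineage):
    """Depth-first walk over hypothetical column-level lineage edges."""
    seen, stack = set(), [column]
    while stack:
        for child in lineage.get(stack.pop(), []):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def review_change(column, lineage, queries_30d, critical_threshold=100):
    """Classify a proposed change by how heavily its downstream columns are used."""
    usage = {c: queries_30d.get(c, 0) for c in downstream(column, lineage)}
    active = {c: n for c, n in usage.items() if n > 0}
    if any(n >= critical_threshold for n in active.values()):
        decision = "needs_review"      # heavily used dependency downstream
    elif active:
        decision = "notify_owners"     # used, but below the critical threshold
    else:
        decision = "auto_approve"      # no downstream column has recent queries
    return {"decision": decision, "active_dependencies": sorted(active)}


LINEAGE = {
    "orders.total": ["mart.revenue.gross"],
    "orders.discount_code": ["mart.promo_usage.code"],
    "orders.legacy_flag": ["mart.archive.flag"],
}
QUERIES_30D = {"mart.revenue.gross": 450, "mart.promo_usage.code": 12}

total_review = review_change("orders.total", LINEAGE, QUERIES_30D)
promo_review = review_change("orders.discount_code", LINEAGE, QUERIES_30D)
legacy_review = review_change("orders.legacy_flag", LINEAGE, QUERIES_30D)
```

Running a check like this inside the pull request or CI step keeps the decision in the workflow engineers already use, rather than in a separate tool.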

FAQs: What CXOs Should Clarify Before Investing

Are we solving for visibility or decision-making?

Most organizations achieve visibility. The real value lies in decision intelligence at the point of change. Catalogs and lineage tools give you a map. LLM copilots give you a navigator.

Do we have sufficient lineage granularity?

Without column-level lineage, predictions will lack accuracy. Table-level lineage is a starting point, not a foundation for impact intelligence.

Is usage data integrated into metadata systems?

Without query intelligence, it is difficult to identify which dependencies matter most. A dependency that has not been queried in six months carries a very different risk from one powering an executive dashboard that is refreshed daily.
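One hedged way to express this difference is a usage score that discounts query volume by how long ago the asset was last queried. The formula, the 30-day half-life, and the `usage_risk` name are illustrative assumptions, not an established standard.

```python
import math
from datetime import date


def usage_risk(query_count, last_queried, today, half_life_days=30):
    """Score = log-scaled query volume, halved for every `half_life_days` of inactivity."""
    age_days = max((today - last_queried).days, 0)
    recency = 0.5 ** (age_days / half_life_days)
    return math.log1p(query_count) * recency


today = date(2026, 4, 22)
# Same historical volume, very different recency.
daily_dashboard = usage_risk(900, date(2026, 4, 21), today)
dormant_report = usage_risk(900, date(2025, 10, 22), today)
```

With these inputs the dormant report scores far lower than the daily dashboard even though both have the same historical query count, which is the distinction query intelligence is meant to surface.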

Will teams actually use this capability?

Adoption depends on embedding insights directly into existing engineering workflows, not deploying a standalone tool that requires context switching. Our article on one architecture: from data fragmentation to AI performance explains how workflow integration, not capability breadth, is what determines whether an AI layer gets used in practice.

What is the risk of poor metadata quality feeding the LLM?

Garbage in, garbage out applies directly here. An LLM reasoning over incomplete or stale metadata will generate confident-sounding but inaccurate impact assessments, which is potentially worse than no assessment at all. Metadata quality must be an engineering SLA, not a documentation exercise.

Conclusion

LLMs are redefining metadata as a predictive control layer for data platforms, enabling organizations to anticipate risk and execute changes with confidence. The advantage lies in reducing uncertainty while increasing speed and alignment with business outcomes.

Perceptive Analytics helps enterprises build LLM-powered metadata intelligence layers that make impact analysis instant and reliable. The next step is to evaluate your metadata readiness and partner with Perceptive Analytics to operationalize predictive, AI-driven data change management at scale.

Ready to turn your metadata into a predictive control layer that prevents failures before they happen? Talk with our consultants today. Book a session with our experts now.

Frequently Asked Questions

How does an LLM copilot differ from existing lineage visualization tools?

Lineage tools show relationships, but you still have to interpret what they mean. An LLM copilot translates those relationships into plain-language answers: what will break, who owns the affected assets, and what the safest deployment path looks like. The difference is between a map and a navigator.

What's the risk of trusting LLM predictions for high-stakes schema changes?

The risk is directly tied to metadata quality. With incomplete or stale metadata, predictions lose accuracy. Organizations should treat LLM outputs as decision support: rely on them for routine changes, and retain human review for critical or novel scenarios until trust is established.

How do we keep metadata fresh enough for the LLM to remain accurate?

Automated lineage capture and pipeline-integrated metadata updates are essential. Metadata that depends on manual documentation quickly becomes outdated. Treating metadata as a live, governed asset with ownership, refresh SLAs, and automated testing is what keeps LLM predictions reliable.
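A refresh SLA can be enforced with a check as small as the one below. It is a sketch under stated assumptions: the asset names, the timestamps, the per-asset SLA mapping, and the 24-hour default are all hypothetical.

```python
from datetime import datetime, timedelta


def stale_assets(last_refreshed, refresh_sla, now, default_sla=timedelta(hours=24)):
    """Return the names of assets whose metadata is older than its refresh SLA."""
    return sorted(
        name
        for name, refreshed_at in last_refreshed.items()
        if now - refreshed_at > refresh_sla.get(name, default_sla)
    )


NOW = datetime(2026, 4, 22, 12, 0)
LAST_REFRESHED = {
    "finance.revenue_daily": NOW - timedelta(hours=2),
    "staging.payments": NOW - timedelta(hours=30),
    "raw.transactions": NOW - timedelta(minutes=90),
}
# Only raw.transactions has a tighter SLA; the others use the default.
REFRESH_SLA = {"raw.transactions": timedelta(hours=1)}

stale = stale_assets(LAST_REFRESHED, REFRESH_SLA, NOW)
```

A copilot could refuse to answer, or attach a warning, when any asset on the impact path appears in this list.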

How do we build organizational trust in AI-generated impact assessments?

Start with lower-risk changes where predictions can be validated against known outcomes. Track accuracy over time and surface it transparently. As the system demonstrates reliability, confidence builds naturally. Embedding explanations rather than just predictions into the copilot output also helps engineers and stakeholders understand the reasoning rather than treating it as a black box.
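Tracking accuracy over time can start with precision and recall of predicted impact against what actually broke. The sketch below assumes each past change is logged as a pair of sets (predicted assets, actually affected assets); the asset names and history are made up for illustration.

```python
def precision_recall(predicted, actual):
    """Compare predicted impacted assets with those actually affected."""
    true_positives = len(predicted & actual)
    # An empty set on either side is treated as vacuously perfect for that metric.
    precision = true_positives / len(predicted) if predicted else 1.0
    recall = true_positives / len(actual) if actual else 1.0
    return precision, recall


# Hypothetical log: (predicted impact, observed impact) per past change.
HISTORY = [
    ({"dash.revenue", "model.churn"}, {"dash.revenue"}),
    ({"dash.ops"}, {"dash.ops", "pipe.export"}),
]

scores = [precision_recall(predicted, actual) for predicted, actual in HISTORY]
avg_precision = sum(p for p, _ in scores) / len(scores)
avg_recall = sum(r for _, r in scores) / len(scores)
```

Low recall means the copilot missed real breakages, which is usually the more costly error for schema changes, so it is worth reporting the two numbers separately rather than as a single accuracy figure.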