The End of Request-Driven BI: Why Data Platforms Are Taking Over

Analytics | April 22, 2026

Why shifting from request-driven analytics to data platforms is now a structural necessity for enterprises.

Executive Summary

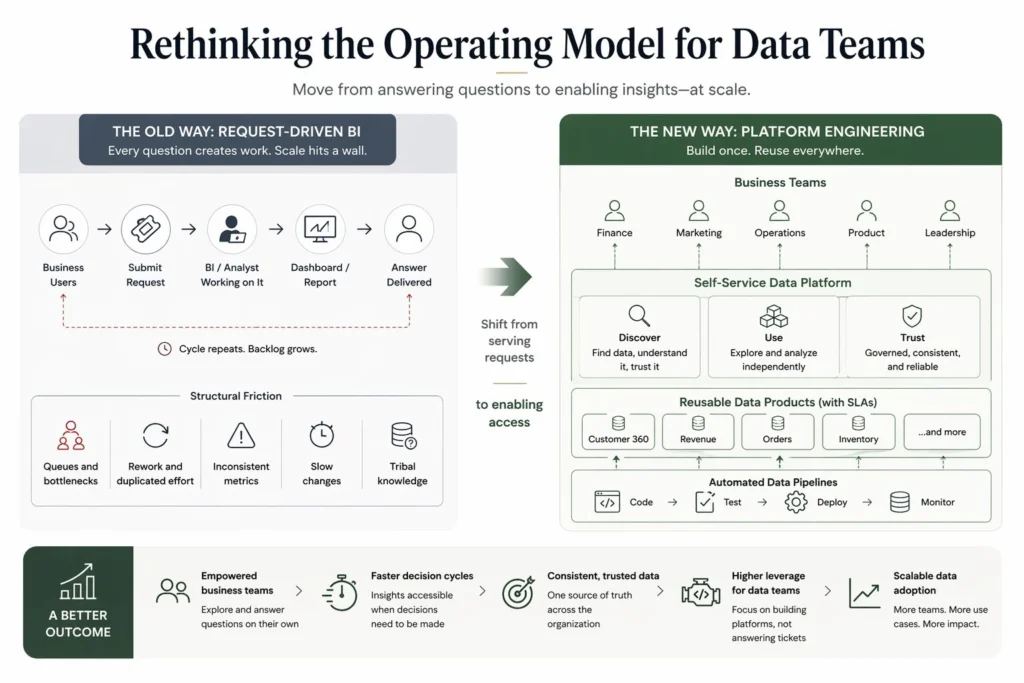

Traditional BI teams operate as request-driven service units, where every business question depends on analyst bandwidth, creating decision delays, duplicated logic, and limited scalability. As demand grows, this model becomes a bottleneck. Platform engineering shifts data teams toward building self-service platforms, reusable data products with SLAs, and automated governance, enabling business teams to access and use data independently. This transition drives 3 to 5x higher output from the same team, faster decision cycles, and consistent enterprise-wide metrics, making it a critical shift for scaling data-driven organizations.

When Data Becomes a Product, Decision-Making Starts Scaling

A Perceptive Analytics POV

In our experience with a diverse set of client teams, we consistently see that BI teams struggle not because of capability gaps, but because of the request-response operating model. Every dashboard, metric change, or new question enters a queue, creating delays that compound as demand grows across the business. Organizations that scale move away from this model and build internal data platforms where datasets are discoverable, governed, and reusable without intervention. Data teams shift from answering questions to designing systems that answer them by default, through data products, automated pipelines, and self-service access. This reduces dependency on analysts and enables decisions to happen closer to the business, at the speed required to compete.


The Structural Bottleneck: Why Every BI Request Adds Friction Instead of Value

- Every incremental question requires engineering effort, even when the data already exists

- Dashboards are built for narrow use cases, limiting reuse and forcing repeated development

- Analysts act as intermediaries, translating business needs instead of enabling direct access

- Backlogs grow non-linearly, as demand increases across multiple teams

- Metric definitions diverge, as similar logic is recreated across reports and pipelines

- Decision-making slows down, as even simple changes require prioritization and development cycles

This creates a compounding effect where data volume increases, but decision velocity declines.

From a CXO perspective, this is not just inefficiency. It is a direct constraint on growth and responsiveness. McKinsey highlights that organizations embedding data into workflows outperform peers significantly, but only when data is accessible without friction.

The root issue is structural. BI teams are designed to serve requests, not to scale access. As long as every interaction with data requires mediation, adding more tools or people will only delay the problem. We have seen that increasing team size temporarily reduces backlog, but demand quickly catches up. The only sustainable solution is to eliminate dependency on the team for every question. Our article on static pipelines as an enterprise liability explains why rigid, request-dependent architectures structurally prevent this kind of scale.
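The catch-up effect can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not a measurement: the function name `simulate_backlog` and all the numbers (100 requests per month growing by 10 each month, capacities of 120 and 150) are hypothetical and chosen only to show the shape of the curve.

```python
def simulate_backlog(initial_demand, monthly_growth, capacity, months):
    """Return the open-request backlog at the end of each month.

    All inputs are illustrative assumptions: demand grows by a fixed
    amount each month while team capacity stays flat.
    """
    backlog = 0
    demand = initial_demand
    history = []
    for _ in range(months):
        # Requests beyond capacity roll over; the backlog never goes negative.
        backlog = max(0, backlog + demand - capacity)
        history.append(backlog)
        demand += monthly_growth
    return history


# Hypothetical team that can close 120 requests per month.
current_team = simulate_backlog(initial_demand=100, monthly_growth=10, capacity=120, months=9)

# Same demand, but the team is enlarged to close 150 requests per month.
larger_team = simulate_backlog(initial_demand=100, monthly_growth=10, capacity=150, months=9)

print(current_team)
print(larger_team)
```

In this sketch the larger team stays clear of backlog for three more months and then follows the same accelerating curve. Added capacity moves the starting point, while the shape of the problem stays the same.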

What Platform Engineering Changes in Data Teams

| Capability | Traditional BI Team | Platform Engineering Model |
|---|---|---|
| Access Model | Request-based (tickets, dashboards) | Self-service via internal platforms |
| Core Output | Reports and dashboards | Reusable data products with SLAs |
| Dependency | High dependency on analysts | Minimal dependency, guided self-service |
| Data Discovery | Tribal knowledge or static documentation | Internal data portal with metadata and lineage |
| Change Management | Manual validation and updates | Automated testing and CI/CD pipelines |

Platform engineering introduces a product mindset for data. Instead of building a dashboard for “revenue by region,” teams build a revenue data product that already supports slicing by region, time, channel, and customer segment. This allows business users to answer multiple questions without creating new requests.
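To make the difference concrete, here is a minimal sketch of a revenue data product exposed as one function that accepts any combination of supported dimensions. The names (`RevenueRecord`, `revenue_by`, `DIMENSIONS`) and the sample rows are hypothetical, and a real platform would serve this from a warehouse or semantic layer rather than an in-memory list.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RevenueRecord:
    # Hypothetical row shape for the revenue data product.
    region: str
    month: str
    channel: str
    segment: str
    amount: float


# Dimensions the product supports out of the box.
DIMENSIONS = ("region", "month", "channel", "segment")


def revenue_by(records, *dimensions):
    """Total revenue grouped by any combination of supported dimensions."""
    unknown = set(dimensions) - set(DIMENSIONS)
    if unknown:
        raise ValueError(f"Unsupported dimensions: {sorted(unknown)}")
    totals = defaultdict(float)
    for record in records:
        key = tuple(getattr(record, name) for name in dimensions)
        totals[key] += record.amount
    return dict(totals)


# Made-up sample data for illustration only.
SAMPLE = [
    RevenueRecord("EMEA", "2026-01", "online", "enterprise", 120.0),
    RevenueRecord("EMEA", "2026-01", "partner", "smb", 80.0),
    RevenueRecord("APAC", "2026-02", "online", "smb", 50.0),
]

by_region = revenue_by(SAMPLE, "region")
by_region_channel = revenue_by(SAMPLE, "region", "channel")
print(by_region)
print(by_region_channel)
```

The "revenue by region" question and the "revenue by region and channel" question are both answered by the same governed logic, so neither requires a new dashboard request.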

At Perceptive Analytics, we see organizations that adopt this model consistently achieve significant gains in productivity, consistency, and scalability without increasing team size. Our data engineering consulting practice is structured around building this platform layer, not just individual pipelines or dashboards.

A Practical Model to Build a Self-Service Data Platform

To move from a request-driven BI model to a scalable platform, organizations need to implement four core capabilities.

Internal Developer Portal for Data: A centralized platform where users can discover datasets, understand definitions, view lineage, and access data independently. This removes dependency on analysts and increases data adoption. Our article on data observability as foundational infrastructure covers how metadata management and lineage tracking are the foundation that makes this portal trustworthy enough for business users to rely on.
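As a rough sketch of what discovery and lineage mean in practice, the example below models a tiny catalog in memory. Everything here is an assumption for illustration: the `DatasetEntry` shape, the `search` and `lineage` helpers, and the three dataset entries are hypothetical, and a production portal would sit on a metadata store rather than a Python dictionary.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetEntry:
    # Hypothetical catalog record: what a user sees in the portal.
    name: str
    description: str
    owner: str
    upstream: list[str] = field(default_factory=list)


# Made-up catalog contents for illustration only.
CATALOG = {
    "raw_orders": DatasetEntry(
        "raw_orders", "Order events loaded from the order system", "data-platform"
    ),
    "customer_master": DatasetEntry(
        "customer_master", "One governed record per customer", "crm-data"
    ),
    "revenue": DatasetEntry(
        "revenue",
        "Recognized revenue by order and customer",
        "finance-data",
        upstream=["raw_orders", "customer_master"],
    ),
}


def search(catalog, term):
    """Find datasets whose name or description mentions the term."""
    term = term.lower()
    return sorted(
        entry.name
        for entry in catalog.values()
        if term in entry.name.lower() or term in entry.description.lower()
    )


def lineage(catalog, name):
    """Return every dataset the named dataset depends on, directly or indirectly."""
    seen = set()
    stack = list(catalog[name].upstream)
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        stack.extend(catalog[current].upstream)
    return sorted(seen)


print(search(CATALOG, "customer"))
print(lineage(CATALOG, "revenue"))
```

A business user searching for "customer" finds both the customer master and the revenue product that depends on it, and can trace where revenue comes from without asking an analyst.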

Reusable Data Products with SLAs: Datasets such as customer master, revenue, or operations metrics are treated as products with defined ownership, refresh frequency, and performance guarantees, ensuring reliability and reuse across teams. Our article on why data integration strategy is critical for metadata and lineage explains why lineage is what makes an SLA on a data product enforceable and auditable.
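One way to picture an SLA on a data product is as a small, explicit contract that can be checked automatically. The sketch below is illustrative only: `DataProductSLA`, `sla_report`, the 24-hour refresh interval, and the 5-second query target are hypothetical values, not recommended thresholds.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class DataProductSLA:
    # Hypothetical contract: ownership, refresh frequency, performance guarantee.
    name: str
    owner: str
    refresh_interval: timedelta
    max_query_seconds: float


def sla_report(sla, last_refreshed, observed_query_seconds, now):
    """Compare observed freshness and query time against the contract."""
    age = now - last_refreshed
    return {
        "product": sla.name,
        "owner": sla.owner,
        "fresh": age <= sla.refresh_interval,
        "fast_enough": observed_query_seconds <= sla.max_query_seconds,
    }


# Illustrative contract and observations.
REVENUE_SLA = DataProductSLA(
    name="revenue",
    owner="finance-data",
    refresh_interval=timedelta(hours=24),
    max_query_seconds=5.0,
)

now = datetime(2026, 4, 22, 9, 0, tzinfo=timezone.utc)
report = sla_report(
    REVENUE_SLA,
    last_refreshed=now - timedelta(hours=30),  # refreshed 30 hours ago
    observed_query_seconds=2.1,
    now=now,
)
print(report)
```

Here the product meets its performance guarantee but misses its freshness guarantee, and the report names the owner responsible for fixing it.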

Automated Testing and CI/CD for Data Pipelines: Data pipelines are tested, version-controlled, and automatically deployed, with rollback mechanisms to prevent failures. This reduces manual effort and improves trust in data. Our article on how automated data quality monitoring improved accuracy and trust across systems shows what this automated validation layer produces in a production environment.
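The kind of check such a pipeline runs on every change can be sketched in a few lines. This is a simplified illustration, not a specific tool's API: the helper names, the `order_id` and `amount` columns, and the sample rows are all hypothetical.

```python
from collections import Counter


def null_rows(rows, column):
    """Indexes of rows where the column is missing or None."""
    return [i for i, row in enumerate(rows) if row.get(column) is None]


def duplicate_values(rows, column):
    """Non-null values that appear more than once in the column."""
    counts = Counter(
        row.get(column) for row in rows if row.get(column) is not None
    )
    return [value for value, count in counts.items() if count > 1]


def negative_rows(rows, column):
    """Indexes of rows where the column holds a negative number."""
    return [
        i for i, row in enumerate(rows)
        if row.get(column) is not None and row[column] < 0
    ]


def validate_revenue(rows):
    """Run all checks and return only the ones that failed."""
    checks = {
        "order_id is null": null_rows(rows, "order_id"),
        "order_id is duplicated": duplicate_values(rows, "order_id"),
        "amount is null": null_rows(rows, "amount"),
        "amount is negative": negative_rows(rows, "amount"),
    }
    return {name: hits for name, hits in checks.items() if hits}


# Made-up batches for illustration only.
GOOD = [{"order_id": 1, "amount": 120.0}, {"order_id": 2, "amount": 80.0}]
BAD = [
    {"order_id": 1, "amount": 120.0},
    {"order_id": 1, "amount": -5.0},
    {"order_id": None, "amount": None},
]

good_failures = validate_revenue(GOOD)
bad_failures = validate_revenue(BAD)
print(good_failures)
print(bad_failures)
```

In a CI/CD setup, a non-empty result would block the deployment or trigger a rollback, so a bad batch never reaches self-service users.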

Documentation as Code: Metadata, lineage, and definitions are maintained in version-controlled systems and updated automatically, ensuring consistency and transparency across the organization.
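A minimal sketch of the idea: metric definitions live in a version-controlled file, and the published documentation is generated from them rather than written by hand. The `METRICS` entries and the `render_markdown` helper below are hypothetical examples, and the definitions are placeholders rather than recommended formulas.

```python
# Hypothetical metric definitions, kept in version control next to the pipelines.
METRICS = {
    "net_revenue": {
        "definition": "Gross revenue minus refunds and discounts",
        "owner": "finance-data",
        "upstream": ["raw_orders", "refunds"],
    },
    "gross_margin": {
        "definition": "Net revenue minus cost of goods sold",
        "owner": "finance-data",
        "upstream": ["net_revenue", "cost_of_goods"],
    },
}


def render_markdown(metrics):
    """Generate a documentation table from the metric definitions."""
    lines = [
        "| Metric | Definition | Owner | Upstream |",
        "|---|---|---|---|",
    ]
    for name in sorted(metrics):
        meta = metrics[name]
        upstream = ", ".join(meta["upstream"])
        lines.append(
            f"| {name} | {meta['definition']} | {meta['owner']} | {upstream} |"
        )
    return "\n".join(lines)


docs = render_markdown(METRICS)
print(docs)
```

Because the table is regenerated on every change, a definition cannot drift from its documentation: updating the metric and updating the docs are the same commit.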

For teams implementing this self-service layer on top of Snowflake or another cloud warehouse, the semantic layer is typically built and governed through transformation tooling that enforces consistent definitions across every consuming team.

What Changes When Business Teams No Longer Wait for Data

- Business teams independently explore and analyze data, reducing reliance on BI teams

- Decision cycles shrink significantly, enabling faster responses to market changes

- Data consistency improves, as all teams use standardized data products

- Engineering effort shifts to high-impact work, such as building new capabilities

- Data adoption increases across the organization, driving measurable business outcomes

This shift has direct business impact. Faster and more reliable access to data enables better pricing strategies, improved operational efficiency, and stronger customer engagement. Organizations that adopt platform engineering consistently see higher alignment between data and business outcomes, faster execution, and improved ROI from data investments. Our article on 5 ways to make analytics faster maps the specific interventions that accelerate this transition most quickly. For the visualization layer sitting on top of this platform, our Tableau consulting and Power BI consulting practices are built specifically around governed, self-service delivery models rather than static report development.

FAQs: Real CXO Questions When Moving from BI Teams to Data Platforms

“Our business teams are heavily dependent on BI. How do we reduce this without disrupting operations?”

At Perceptive Analytics, we typically start by identifying high-impact datasets, such as customer or revenue, and converting them into governed data products with self-service access. This allows a gradual transition in which dependency on the BI team declines without affecting ongoing reporting. Our article on data transformation maturity and choosing the right framework provides a structured way to prioritize which datasets to productize first.

“We already have dashboards. Why is that not enough?”

Dashboards answer predefined questions, but businesses need the ability to explore new questions dynamically. We help organizations move from static dashboards to flexible data products that support multiple use cases without rebuilding logic.

“How do we ensure data quality if business users access data directly?”

We implement automated testing, validation layers, and SLAs within data pipelines, ensuring that self-service access is built on trusted and governed data. This improves quality compared to manual validation processes.

“What does success look like after this transformation?”

In our experience at Perceptive Analytics, success is when business teams no longer wait for data, analysts focus on strategic problems, and the same data assets are reused across multiple functions without duplication. Our article on the CXO role in BI strategy and adoption defines what this looks like from an executive sponsorship and measurement standpoint.

Conclusion

The shift from BI teams to data platforms is not an optimization. It is a structural reset of how decisions are made at scale. Organizations that stay in a request-driven model will face increasing decision latency, rising costs, and fragmented data trust, regardless of investment in tools or talent. Platform engineering turns data into a scalable, reusable, and governed capability, removing dependency on team bandwidth. The advantage is not just efficiency, but the ability to respond faster and compete more effectively.

At Perceptive Analytics, we help enterprises build data platforms that scale decision-making itself. The real question is not whether to adopt this model, but how long your current BI structure can keep up with the decisions your business demands.

Ready to move from a request-driven BI model to a scalable data platform? Talk with our consultants today. Book a session with our experts now.

Frequently Asked Questions

How do we know if our BI team has become a bottleneck?

Common signs include growing request backlogs, the same data being rebuilt across multiple dashboards, business teams maintaining their own shadow spreadsheets, and frequent complaints that reports are never quite right. If demand consistently outpaces delivery, the model itself is the constraint.

What's the difference between a dashboard and a data product?

A dashboard answers a predefined question for a specific audience. A data product is a governed, reusable dataset, such as a customer master or revenue model, that supports many questions across many teams without needing to be rebuilt each time.

How do we prevent data quality from dropping when business users access data directly?

Quality is maintained through the platform, not through analyst oversight. Automated testing, defined SLAs, and validation layers built into pipelines ensure that what’s self-served is already trustworthy and often more reliable than manually assembled reports.

What skills does a data team need to shift toward platform engineering?

Beyond technical skills like pipeline development and data modeling, teams need product thinking: the ability to define users, use cases, and reliability standards for data assets. Soft skills like stakeholder communication and documentation discipline become more important, not less.

How long does this transition typically take before teams see meaningful change?

Early impact, such as reduced backlog pressure and faster access to common datasets, often appears within a few months when the highest-priority data products are built first. Full organizational adoption takes longer, but results compound as more assets are productized and self-service expands.